![[The logo of the Austrian Research Institute for Artificial Intelligence]](https://punderstanding.ofai.at/theme/images/OFAI-logo-OFAI-standard-plainsvg.svg)

Dr. Tristan Miller

Austrian Research Institute for Artificial Intelligence

Vienna, Austria

Creative language, such as humour and wordplay, is all around us: every day we are amused by clever advertising slogans; our televisions and cinemas play an endless string of eloquent comedies; and literary critics write volumes on the wit of contemporary and classic authors. The ubiquity of creative language, and the constant need for creative professionals to analyze and translate it, would seem to make it a prime candidate for automatic language processing techniques such as machine translation. However, computers have tremendous difficulties in processing the vagaries of creative language. This is because they view anomalies, incongruities, and ambiguities in the input as things that must be resolved in favour of a single “correct” interpretation, rather than preserved and interpreted in their own right. But if computers cannot translate creative language on their own, can they at least provide specialized support to creative professionals, such as human translators of humour and wordplay?

The translation of wordplay is one of the most extensively researched problems in translation studies, but until now it has attracted little attention in the fields of artificial intelligence and language technology. In Computational Pun-derstanding, we will study how professional translators process wordplay, with particular attention to the tools, knowledge sources, and working processes they employ. We will then decompose these processes and look for parts that can be modelled computationally as part of an interactive, computer-assisted translation system. With this “machine-in-the-loop” paradigm, language technology will be applied only to those subtasks it can perform best, such as searching a large vocabulary space for translation candidates matching certain phonetic and semantic constraints. Subtasks that depend heavily on real-world background knowledge—such as selecting the candidate that best fits the wider humorous context—will be left to the human translator. To fulfill this ambitious vision, it will be necessary to develop innovative, interactive techniques for identifying instances of wordplay, interpreting and exploring their semantics, and generating target-language candidates that best preserve the ambiguity and humorousness of the original.
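As a rough illustration of the candidate-search subtask mentioned above, the following minimal sketch filters a toy lexicon by one semantic constraint and one phonetic constraint. It is not the project's system: the `Candidate` structure, the `find_candidates` function, the sense labels, and the informal phoneme symbols are all hypothetical, and plain edit distance over phoneme sequences stands in for whatever phonetic similarity measure a real tool would use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    word: str            # target-language word
    phonemes: tuple      # illustrative phoneme sequence (hypothetical transcription)
    senses: frozenset    # illustrative sense labels (hypothetical)


def edit_distance(a, b):
    """Levenshtein distance between two sequences."""
    prev = list(range(len(b) + 1))
    for i, x in enumerate(a, start=1):
        curr = [i]
        for j, y in enumerate(b, start=1):
            cost = 0 if x == y else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]


def find_candidates(lexicon, target_phonemes, required_sense, max_distance=1):
    """Return words that carry the required sense and sound close to the target.

    Results are ordered from phonetically nearest to farthest.
    """
    hits = []
    for entry in lexicon:
        if required_sense not in entry.senses:
            continue  # semantic constraint not met
        distance = edit_distance(entry.phonemes, target_phonemes)
        if distance <= max_distance:  # phonetic constraint
            hits.append((distance, entry.word))
    return [word for _, word in sorted(hits)]


# Toy lexicon, invented for illustration only.
LEXICON = [
    Candidate("bare", ("b", "eh", "r"), frozenset({"naked"})),
    Candidate("bear", ("b", "eh", "r"), frozenset({"animal"})),
    Candidate("beer", ("b", "ih", "r"), frozenset({"drink"})),
    Candidate("deer", ("d", "ih", "r"), frozenset({"animal"})),
    Candidate("boar", ("b", "ao", "r"), frozenset({"animal"})),
]

# Look for animal words that sound like "bare".
shortlist = find_candidates(LEXICON, ("b", "eh", "r"), "animal")
print(shortlist)  # ['bear', 'boar']
```

In keeping with the machine-in-the-loop idea, the function only produces a ranked shortlist; choosing the candidate that best fits the wider humorous context would remain the translator's decision.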

The project's scientific innovation lies in its connection of hitherto separate channels of research: linguistic theories of humour, computational representations and analyses of word meanings, manual translation of wordplay, and computer-assisted translation technologies. Besides providing new insights into the linguistic processes and translation strategies for wordplay, the research has the potential to significantly ease the burdens borne by professional translators in the processing of creative language, fostering creative solutions to unorthodox translation problems.

![[The logo of the Austrian Research Institute for Artificial Intelligence]](https://punderstanding.ofai.at/theme/images/OFAI-logo-OFAI-standard-plainsvg.svg)

Máté Lajkó

Austrian Research Institute for Artificial Intelligence

Vienna, Austria

![[The logo of the University of Vienna]](https://punderstanding.ofai.at/theme/images/Uni_wien_siegel.svg)

Prof. Gerhard Budin

Centre for Translation Studies

University of Vienna, Austria

![[The logo of Texas A&M University–Commerce]](https://punderstanding.ofai.at/theme/images/Texas_AM_University-Commerce_seal.svg)

Prof. Christian F. Hempelmann

Ontological Semantic Technology Laboratory

Texas A&M University–Commerce, USA

![[The logo of the Austrian Science Fund (FWF)]](https://punderstanding.ofai.at/theme/images/fwf-logo_vektor_var2.svg)

Austrian Science Fund (FWF)

Project number: M 2625-N31

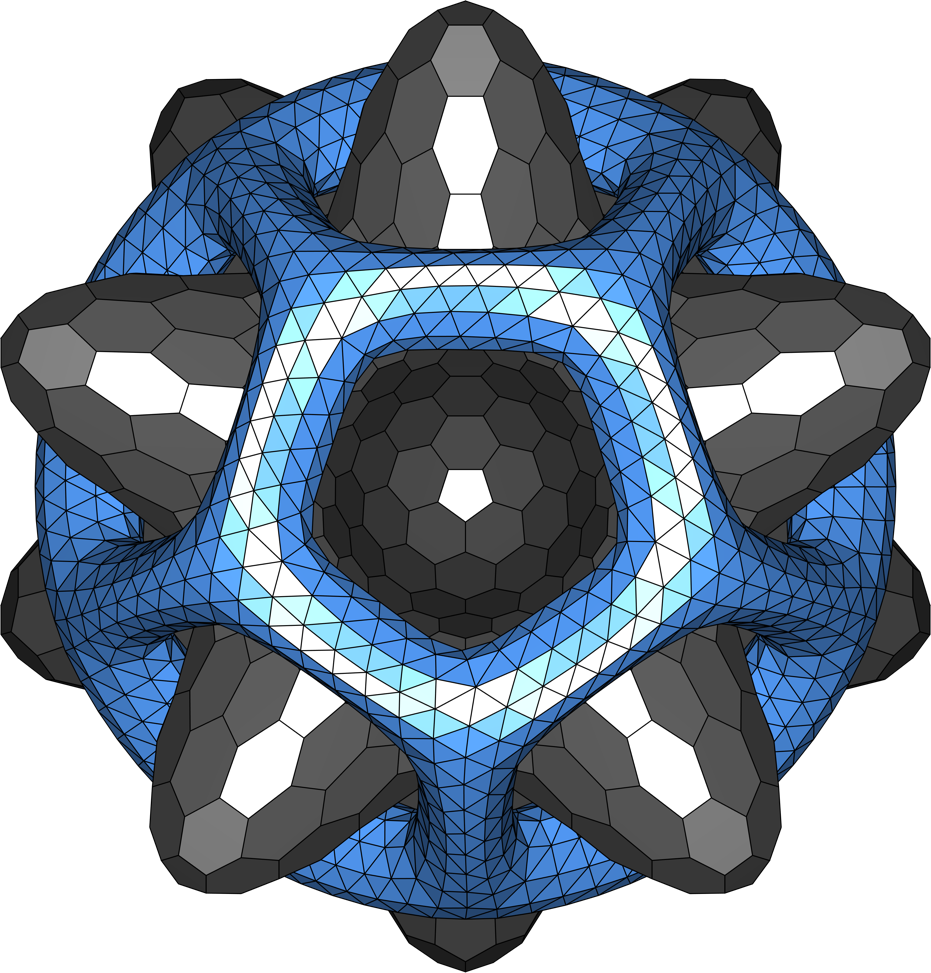

Computational analysis of wordplay

Wordplay translation track previewed

CLEF proceedings now out

Tech for creative-text translation

Aggressive humour; humour & AI

Wordplay translation track previewed

Anna Palmann on pun phonology

New directions in NLP for humour

Invited talk in Valencia

Style-conditioned poetry generation

Automatic humour translation

HCI in pun translation

ISHS 2021 webinar series

Tech for creative-text translation

HCI in pun translation

Interview in 1E9 magazine

Dr. Miller commended by EACL

Learning with disagreements

Do machines have a sense of humour?

HCI in pun translation

A palindromic plagiarism puzzle

GPP, a generic preprocessor

New language tech developer

Summer internship in language tech

Predicting humour on Twitter

Memorial articles published

On requirements of translating humour

Panel series returns to ISHS

"Computer verstehen keinen Spaß"

How computers can help comedians

Translatio ex machina

Dr. Miller commended by EMNLP–IJCNLP

The Punster's Amanuensis

Predicting humour on Twitter

Interpreting wordplay computationally

Learning to rank the humorousness of jokes

On the future of automatic humour translation

Controlling style in machine-generated poetry

Detecting visual humour by caption analysis